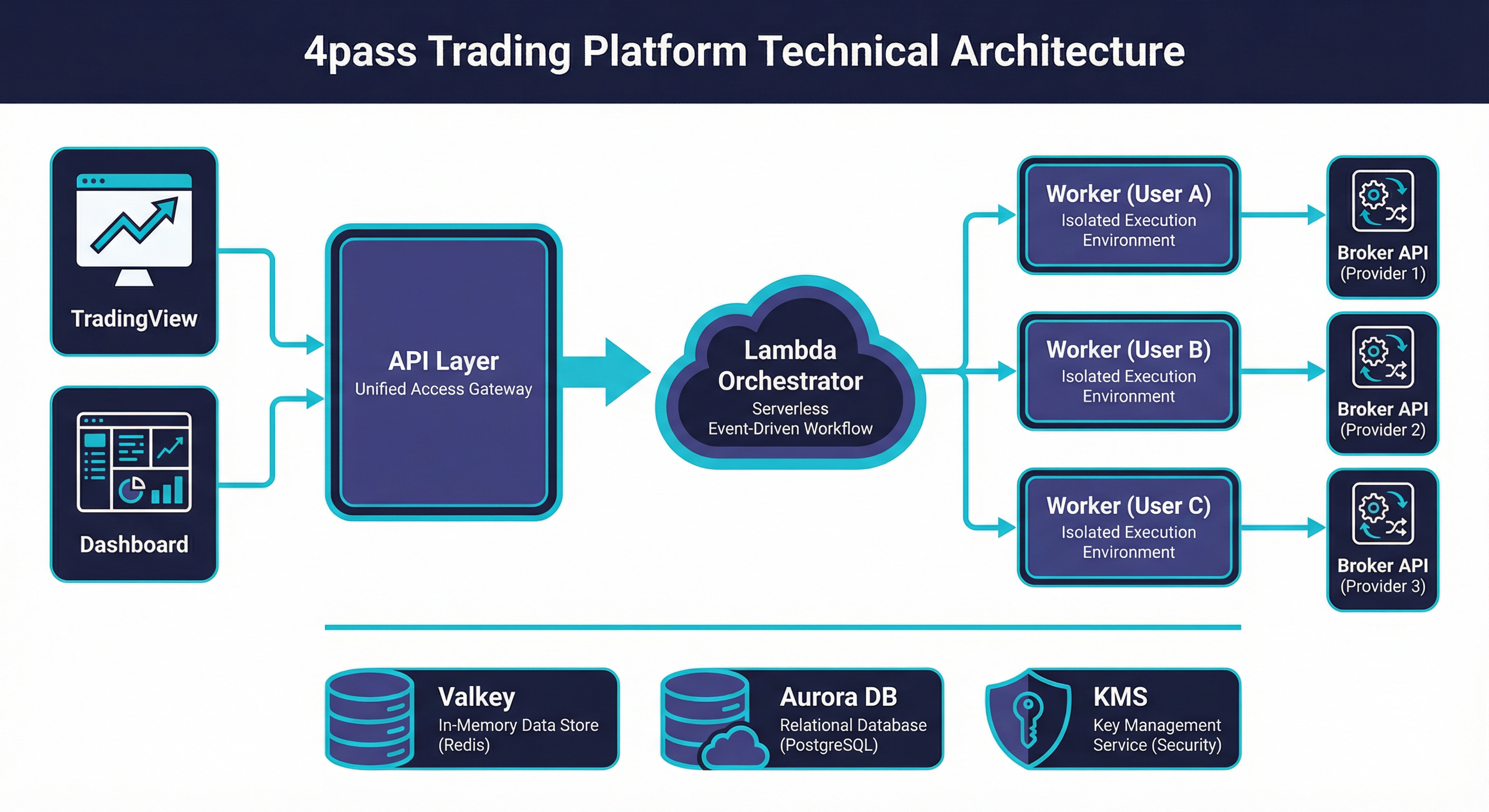

# Architecture Overview

4pass is a production SaaS platform that bridges TradingView with multiple broker APIs, enabling automated order execution from Pine Script strategies. The system was designed from day one for horizontal scaling — every component is stateless, every bottleneck has a queue in front of it, and every scaling tier is a single Terraform variable change. Today it runs on AWS with per-user worker isolation, serverless orchestration, and a managed data layer that scales from a single user to 100,000+ with no architectural rewrites.

## System Architecture

```mermaid
flowchart LR
    subgraph clients["Clients"]
        TV["TradingView"]
        UI["Dashboard"]
    end
    subgraph edge["Edge"]
        WAF["WAF + ALB"]
    end
    subgraph compute["API"]
        API["FastAPI on ECS"]
    end
    subgraph queues["Queues"]
        SQS["SQS FIFO x2"]
    end
    subgraph lambda["Orchestration"]
        L["Lambda x5"]
    end
    subgraph workers["Workers"]
        W["ECS Tasks<br/>Per-User Isolated"]
    end
    subgraph data["Data"]
        Valkey["Valkey"]
        RDS["Aurora"]
        KMS["KMS"]
    end
    subgraph broker["Brokers"]
        B["Shioaji · Gate.io"]
    end
    TV & UI --> WAF --> API
    API --> SQS --> L --> W --> B
    API --> Valkey & RDS & KMS
    W --> Valkey
```

For the full detailed architecture, see Compute & Orchestration and Data Layer.

## Design Principles

| # | Principle | Implementation |
|---|---|---|
| 1 | Per-User Isolation | Every active user gets a dedicated ECS task. Broker sessions, credentials, and failures never cross user boundaries. |
| 2 | Serverless Orchestration | Five Lambda functions handle all control-plane work: worker lifecycle, order tasks, maintenance, and pool management. There is no long-running orchestrator process to fail. |
| 3 | Managed Data Layer | ElastiCache Valkey Serverless and Aurora PostgreSQL with RDS Proxy. Zero node management, automatic scaling, built-in HA. |
| 4 | Defense in Depth | WAF → ALB → application-level validation (4 layers on webhooks) → per-user credential encryption with KMS. Every layer assumes the one above it has been compromised. |
| 5 | Infrastructure as Code | ~80 Terraform-managed resources across ECS, Lambda, SQS, VPC, IAM, and CloudWatch. Every environment is reproducible from a single `terraform apply`. |
| 6 | Cost Optimization at Every Layer | EC2 capacity providers over Fargate (75% savings), bridge networking for density (30 tasks/instance), Valkey Serverless over provisioned (pay per ECPU), pool pre-warming over cold starts (897ms vs 3,659ms, about 4× faster). |
| 7 | Bridge Networking for Density | Workers use bridge mode instead of awsvpc, sharing the host ENI. This removes the ENI-per-task limit and enables 30+ tasks on a single EC2 instance. |
| 8 | Queue-Driven Everything | SQS FIFO between API and Lambda decouples request ingestion from processing. The hot path makes no direct Lambda invocations. All control-plane work flows through durable SQS queues, and orders reach workers through per-user Valkey lists. |
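Principle 4's webhook layers are token lookup, user resolution, trading account verification, and signal parsing. Each layer is an early return. The sketch below is illustrative only. The in-memory `TOKENS`, `ACTIVE_USERS`, and `ACCOUNTS` tables, the `account_id` payload field, the `WebhookRejected` exception, and the HTTP status codes are all hypothetical stand-ins, not 4pass's actual schema.

```python
import math
from dataclasses import dataclass


class WebhookRejected(Exception):
    """Raised when a webhook fails one of the four validation layers (hypothetical)."""

    def __init__(self, layer: str, status: int, detail: str) -> None:
        super().__init__(f"{layer}: {detail}")
        self.layer = layer
        self.status = status


@dataclass(frozen=True)
class Signal:
    action: str
    symbol: str
    quantity: float


# Hypothetical in-memory stand-ins for the real database lookups.
TOKENS = {"tok-abc": 42}          # webhook token -> user_id
ACTIVE_USERS = {42}               # users currently allowed to trade
ACCOUNTS = {42: {"acct-1"}}       # user_id -> trading account ids they own
ALLOWED_ACTIONS = {"buy", "sell"}


def validate_webhook(payload: dict) -> tuple[int, str, Signal]:
    # Layer 1: token lookup. Unknown tokens are rejected before anything else.
    user_id = TOKENS.get(payload.get("token", ""))
    if user_id is None:
        raise WebhookRejected("token", 401, "unknown webhook token")

    # Layer 2: user resolution.
    if user_id not in ACTIVE_USERS:
        raise WebhookRejected("user", 403, "user is not active")

    # Layer 3: trading account verification (account must belong to this user).
    account_id = payload.get("account_id", "")
    if account_id not in ACCOUNTS.get(user_id, set()):
        raise WebhookRejected("account", 403, "account does not belong to user")

    # Layer 4: signal parsing and normalization.
    action = str(payload.get("action", "")).strip().lower()
    symbol = str(payload.get("symbol", "")).strip().upper()
    try:
        quantity = float(payload.get("quantity", 0))
    except (TypeError, ValueError):
        raise WebhookRejected("signal", 422, "quantity is not a number") from None
    if action not in ALLOWED_ACTIONS or not symbol or not math.isfinite(quantity) or quantity <= 0:
        raise WebhookRejected("signal", 422, "malformed signal")

    return user_id, account_id, Signal(action, symbol, quantity)
```

In FastAPI, a route could catch `WebhookRejected` and convert `status` into an `HTTPException`. The cheap token check runs first, so most junk traffic is rejected before any account data is read.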

## Component Summary

| Component | Technology | Purpose | Key Metric |
|---|---|---|---|
| Frontend | Vue 3 + Vite | Dashboard, strategy management, account settings | SPA served from FastAPI static mount |
| API | FastAPI on ECS (m6i.large) | REST endpoints, webhook ingestion, authentication | 8 Gunicorn workers, <50ms p99 for auth routes |
| Orchestrator: `worker_control` | Lambda (Python) | Starts, stops, and claims workers via SQS FIFO | 50–500 concurrency, 897ms median cold-to-ready |
| Orchestrator: `order_tasks` | Lambda (Python) | Background fill verification, order state management | 50–500 concurrency, 180s visibility timeout |
| Orchestrator: `maintenance` | Lambda (Python) | Fan-out coordinator for orphan detection | EventBridge every 60s, single invocation |
| Orchestrator: `maintenance_worker` | Lambda (Python) | Processes individual orphan marks and tasks | 100–500 concurrency, parallel execution |
| Orchestrator: `pool_manager` | Lambda (Python) | Scales the pre-warmed worker pool to target size | EventBridge every 5 min |
| Queue: `worker-control` | SQS FIFO | Worker lifecycle commands with message dedup | Visibility 90s, DLQ after 3 retries |
| Queue: `order-tasks` | SQS FIFO | Fill verification and order processing | Visibility 180s, DLQ after 3 retries |
| Queue: `pool-claim` | SQS Standard | Assigns pooled workers to users | Visibility 10s, 5 min retention |
| Workers | ECS on EC2 (r6i.large) | Per-user broker sessions, order execution | 30 tasks/instance, 64 CPU units / 384 MB each |
| Cache | ElastiCache Valkey Serverless | Queues, heartbeats, sessions, rate limits, caches | Auto-scales 1 GB → 10 GB, 1K → 100K ECPU |
| Database | Aurora PostgreSQL + RDS Proxy | Users, accounts, orders, audit logs, sessions | Connection multiplexing, Multi-AZ ready |
| Encryption | KMS (RSA-4096) | Per-user credential encryption, HSM-backed | AES-256-GCM data keys wrapped by the KMS master key |
| Load Balancer | ALB + WAF | TLS termination, routing, rate limiting | Health checks every 15s, 7 WAF rules |
| Networking | VPC (2 AZs) | Public subnets for compute, private subnets for data | Security groups enforce least privilege |
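The two FIFO queues rely on SQS message groups and deduplication IDs. The sketch below builds the keyword arguments an API process might pass to boto3's SQS `send_message` call. It assumes two things the source does not state: each user is its own message group, and the deduplication ID is a SHA-256 hash of the message body. `build_fifo_message` and the queue URLs in the example are hypothetical.

```python
import hashlib
import json


def build_fifo_message(queue_url: str, user_id: int, command: dict) -> dict:
    """Return kwargs for boto3's ``sqs.send_message`` on a FIFO queue (hypothetical helper)."""
    # Canonical JSON (sorted keys, no spaces) so identical commands hash identically.
    body = json.dumps({"user_id": user_id, **command}, sort_keys=True, separators=(",", ":"))
    return {
        "QueueUrl": queue_url,
        "MessageBody": body,
        # One group per user: commands for a user arrive in order,
        # while different users' commands are processed in parallel.
        "MessageGroupId": f"user-{user_id}",
        # SQS drops a message whose dedup ID repeats within its 5-minute window.
        "MessageDeduplicationId": hashlib.sha256(body.encode()).hexdigest(),
    }
```

The result would be passed as `boto3.client("sqs").send_message(**build_fifo_message(queue_url, 42, {"action": "start", "request_id": "r-1"}))`. Deduplication is by content, so a command that can legitimately repeat (start, stop, start) should carry a unique field such as a request ID.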

## Request Flow

A complete webhook order execution — from TradingView alert to broker fill:

```mermaid
sequenceDiagram
    participant TV as TradingView
    participant WAF as AWS WAF
    participant ALB as ALB
    participant API as FastAPI
    participant Redis as Valkey
    participant SQS as SQS FIFO
    participant Lambda as Lambda
    participant Pool as Pool Worker
    participant Worker as User Worker
    participant Broker as Broker API
    TV->>WAF: 1. POST /webhook/tradingview
    WAF->>ALB: 2. Pass (IP exempted, rules checked)
    ALB->>API: 3. TLS terminated, forwarded
    API->>API: 4. Validate (token → user → account → signal)
    API->>Redis: 5. Check worker:active:{user_id}
    alt No active worker
        API->>SQS: 6a. Send to worker-control.fifo
        SQS->>Lambda: 6b. Lambda triggered
        Lambda->>Redis: 6c. Check pool availability
        Lambda->>Pool: 6d. Send claim via SQS pool-claim
        Pool->>Redis: 6e. Set worker mark (897ms total)
    end
    API->>Redis: 7. Push order to trading:user:{id}:requests
    Worker->>Redis: 8. Pop order from queue
    Worker->>Broker: 9. Execute order via broker API
    Broker-->>Worker: 10. Order confirmation
    Worker->>Redis: 11. Write response to trading:response:{req_id}
    API->>SQS: 12. Queue fill verification (order-tasks.fifo)
    SQS->>Lambda: 13. Lambda checks fill status
    Lambda->>Redis: 14. Update final order state
```

Step-by-step breakdown:

- TradingView sends POST: an alert fires from a Pine Script strategy. The payload includes the webhook token and signal data (action, symbol, quantity).

- ALB terminates TLS: an ACM-managed certificate sits on the load balancer. Health checks run every 15 seconds.

- WAF checks rules: TradingView source IPs are exempted from rate limits. All other traffic passes through the SQL injection, XSS, IP reputation, and rate limit rules.

- FastAPI validates (4 layers): token lookup → user resolution → trading account verification → signal parsing and normalization. Any failure returns early with an appropriate error.

- Check Redis for active worker: look up the `worker:active:{user_id}` key. If it is present with a TTL above 5s, the worker is alive.

- No worker? Start one (897ms): the API sends a message to `worker-control.fifo` → Lambda picks it up → checks Redis for pool workers → sends a claim via the `pool-claim` queue → the pool worker receives it and sets the Redis mark. Total: 897ms median. Fallback `RunTask`: 3,659ms. Cold EC2: 45–60s.

- Route order to Redis queue: push a structured order message onto the `trading:user:{user_id}:requests` list.

- Worker pops and processes: the worker's event loop picks up the order within milliseconds via `BLPOP`.

- Call broker API: the worker uses its established broker session to place the order. Auto-reversal logic handles position flipping.

- Response back via Redis: the worker writes the result to `trading:response:{request_id}` with a 60s TTL.

- Background fill verification: the API queues a delayed check on `order-tasks.fifo`. Lambda verifies the fill status with the broker 30–60 seconds later and updates the final state.
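Steps 5 and 7 through 11 of the flow above reduce to a handful of Valkey commands. The sketch below replays them against `FakeValkey`, a hypothetical in-memory stand-in with a manual clock, so it runs without a server. The helper names `worker_is_alive`, `enqueue_order`, and `worker_step` are also hypothetical. Against a real cluster, redis-py (which speaks the same protocol as Valkey) provides `ttl`, `rpush`, `blpop`, and `set(..., ex=60)` for the same operations.

```python
from __future__ import annotations

import json


class FakeValkey:
    """Hypothetical in-memory stand-in for the few Valkey commands used below."""

    def __init__(self) -> None:
        self.now = 0.0  # manual clock in seconds, so expiry is deterministic
        self._kv: dict[str, tuple[str, float | None]] = {}
        self._lists: dict[str, list[str]] = {}

    def set(self, key: str, value: str, ex: int | None = None) -> None:
        self._kv[key] = (value, None if ex is None else self.now + ex)

    def get(self, key: str) -> str | None:
        item = self._kv.get(key)
        if item is None or (item[1] is not None and item[1] <= self.now):
            return None  # missing or expired
        return item[0]

    def ttl(self, key: str) -> int:
        # Same convention as the TTL command: -2 = no such key, -1 = no expiry.
        if self.get(key) is None:
            return -2
        expires = self._kv[key][1]
        return -1 if expires is None else int(expires - self.now)

    def rpush(self, key: str, value: str) -> None:
        self._lists.setdefault(key, []).append(value)

    def lpop(self, key: str) -> str | None:
        items = self._lists.get(key)
        return items.pop(0) if items else None


def worker_is_alive(r: FakeValkey, user_id: int) -> bool:
    # Step 5: the mark must exist with more than 5 seconds left.
    return r.ttl(f"worker:active:{user_id}") > 5


def enqueue_order(r: FakeValkey, user_id: int, request_id: str, order: dict) -> None:
    # Step 7: append to the right; the worker pops from the left, so order is FIFO.
    r.rpush(f"trading:user:{user_id}:requests", json.dumps({"request_id": request_id, **order}))


def worker_step(r: FakeValkey, user_id: int, place_order) -> bool:
    # Steps 8-11: pop one order, call the broker, publish the result for 60 seconds.
    raw = r.lpop(f"trading:user:{user_id}:requests")
    if raw is None:
        return False
    order = json.loads(raw)
    result = place_order(order)
    r.set(f"trading:response:{order['request_id']}", json.dumps(result), ex=60)
    return True
```

A real worker would block on `BLPOP` rather than polling `LPOP`. It would presumably also refresh its `worker:active:{user_id}` mark on a heartbeat so the TTL never falls below the 5-second threshold.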

## Why This Architecture

### EC2 over Fargate: 75% Cost Savings

Fargate charges per vCPU and per GB at a premium, and its smallest task size is 0.25 vCPU (256 CPU units) with 512 MiB of memory. A worker task needs only 64 CPU units and 384 MB of RAM, so on Fargate it would be billed for four times the CPU it uses. A single r6i.large (2 vCPU, 16 GiB) at ~$0.152/hr runs 30 workers, while the same 30 workers on Fargate would cost ~$0.50/hr. At scale, this is the difference between viable and unprofitable.
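The figures quoted in this section can be turned into a back-of-the-envelope cost model. It is not a pricing calculator. The $0.152 and $0.50 hourly figures are the document's own, the function names are hypothetical, and the Fargate rounding only handles tasks at or below the smallest Fargate size.

```python
import math

# Figures quoted in this section (the Fargate number is the document's estimate).
EC2_HOURLY_USD = 0.152            # one r6i.large
WORKERS_PER_INSTANCE = 30
FARGATE_HOURLY_USD_PER_30 = 0.50  # 30 workers on Fargate

# Smallest Fargate task size: 0.25 vCPU (256 CPU units) with 512 MiB.
FARGATE_MIN_CPU_UNITS = 256
FARGATE_MIN_MEMORY_MIB = 512


def fargate_billed_size(cpu_units: int, memory_mib: int) -> tuple[int, int]:
    """Round a tiny task up to the smallest Fargate size.

    Only valid for tasks at or below that minimum; larger tasks must match one
    of Fargate's fixed CPU/memory combinations, which this sketch ignores.
    """
    return max(cpu_units, FARGATE_MIN_CPU_UNITS), max(memory_mib, FARGATE_MIN_MEMORY_MIB)


def ec2_hourly_cost(workers: int) -> float:
    # EC2 is paid per instance, so cost rises in steps of 30 workers.
    return math.ceil(workers / WORKERS_PER_INSTANCE) * EC2_HOURLY_USD


def fargate_hourly_cost(workers: int) -> float:
    # Fargate is paid per task, so cost rises linearly with the worker count.
    return workers * FARGATE_HOURLY_USD_PER_30 / WORKERS_PER_INSTANCE
```

`fargate_billed_size(64, 384)` returns `(256, 512)`, four times the CPU the worker requests. For 1,000 workers, `ec2_hourly_cost` gives 34 instances at about $5.17/hr and `fargate_hourly_cost` gives about $16.67/hr. The EC2 cost moves in steps of 30 workers, so it only stays low if instances stay full.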

### Bridge Networking: 30 Tasks per Instance

The default awsvpc mode attaches a dedicated ENI to every task. A large instance supports only 3 ENIs, and one of them is the host's primary ENI, so only 2 tasks fit (without ENI trunking). Bridge networking instead routes task traffic through a Docker bridge on the host's own ENI, removing this limit entirely. The trade-off is that security groups apply per instance rather than per task. Workers only need outbound internet access to broker APIs, so this is acceptable.
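The density difference comes from which resource runs out first. The minimal sketch below assumes an r6i.large registers 2,048 CPU units and 16,384 MiB with ECS and supports 3 ENIs. The optional `reserved_memory_mib` models memory held back for the OS and ECS agent, and any value passed for it is illustrative. Both function names are hypothetical.

```python
def awsvpc_task_limit(max_enis: int) -> int:
    # awsvpc: one ENI per task, and the instance keeps its primary ENI.
    return max_enis - 1


def bridge_task_limit(
    instance_cpu_units: int,
    instance_memory_mib: int,
    task_cpu_units: int,
    task_memory_mib: int,
    reserved_memory_mib: int = 0,
) -> int:
    # Bridge: ENIs no longer matter; the tighter of CPU and memory decides.
    by_cpu = instance_cpu_units // task_cpu_units
    by_memory = (instance_memory_mib - reserved_memory_mib) // task_memory_mib
    return min(by_cpu, by_memory)
```

With the worker's 64 CPU units and 384 MB, awsvpc stops at 2 tasks. Bridge mode is CPU-bound at 32 tasks, while memory alone would allow 42. That leaves a little headroom above the 30 tasks per instance quoted above.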

### Lambda over EC2 for Orchestration: No SPOF

A long-running orchestrator process is a single point of failure: if it crashes at 2 AM, no workers start. Lambda functions triggered by SQS and EventBridge are highly available by design, because AWS manages retries, concurrency, and availability. There is no orchestrator host to patch, monitor, or restart.
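For an SQS-triggered function, partial batch responses let retries happen per message instead of per batch. The sketch below assumes the event source mapping has `ReportBatchItemFailures` enabled, which the source does not say. `process_command` is a hypothetical placeholder for the real work. Both control queues are FIFO, so the sketch follows AWS's guidance for FIFO sources: stop at the first failure and report that message plus every later one. This keeps a user's commands from being applied out of order.

```python
import json


def process_command(command: dict) -> None:
    """Hypothetical placeholder for the real work (start a worker, verify a fill, ...)."""
    if "user_id" not in command:
        raise ValueError("command has no user_id")


def handler(event: dict, context: object) -> dict:
    """SQS-triggered Lambda entry point returning a partial batch response."""
    records = event.get("Records", [])
    failures = []
    for i, record in enumerate(records):
        try:
            process_command(json.loads(record["body"]))
        except Exception:
            # FIFO: report this record and everything after it as failed, so SQS
            # redelivers them in order (and moves them to the DLQ after repeated failures).
            failures = [{"itemIdentifier": r["messageId"]} for r in records[i:]]
            break
    # Messages not listed here are treated as successful and deleted from the queue.
    return {"batchItemFailures": failures}
```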

### Valkey Serverless: Auto-Scaling ECPU

Provisioned ElastiCache requires capacity planning and over-provisioning for peak. Valkey Serverless scales from 1,000 ECPU to 100,000+ automatically, billing only for consumed compute. During off-hours, ECPU charges fall with traffic, leaving mostly the data-storage charge. During market open (thousands of simultaneous orders), it scales without intervention.

The Architecture Thesis

Every component was chosen to minimize operational toil at the current scale while preserving a clear upgrade path to the next order of magnitude. No component requires replacement to reach 100K users — only configuration changes.